The Story

Parag K. MITAL, Ph.D., is an internationally exhibited artist and interdisciplinary researcher working between computational arts and machine learning practices for nearly 20 years. His work examines the nature of perception in both humans and computers, often exploring the disjunct between the two, through mediums such as generative film, augmented reality, and large-scale archival interpretations. The balance between his scientific and arts practice allows both to reflect on each other: the science driving the theories, and the artwork re-defining the questions asked within the research.

Motivations

Mital’s work is fundamentally motivated by inquiries around the nature of both human and computational perception:

How can we represent our ongoing audiovisual perception of the world? Could it ever be modeled by computers? If so, how does a computer’s perception differ from our own, and what can we gain or lose from those differences?

Foundations of Practice

Guided by these foundational questions about perception, Mital began to explore scientific research in eye-tracking, EEG, and fMRI, as well as computational modeling using Computer Vision and AI.

Foundations of Practice, Continued

Through artistic works of generative film, archival browsing, installation, and augmented reality, the computational models he built in the scientific setting are then synthesized and decoded, exposing gaps within the scientific theories and refining the questions he first sought to examine.

Audiovisual Understanding: Eye-Movements

The starting point of Mital’s investigations was scientific studies of cognition using eye movements, a real-time index of cognition. The studies attempted to model and understand the influences of attention when viewing dynamic scenes.

From 2007 on, with Tim J. Smith, John Henderson, et al., Mital collected over 200 videos of human eye movements and accompanying audio files. He then built computational models of both what we look at and listen to (attention) and what we can and cannot describe (representation), and wrote a thesis on both the auditory and visual models for an arts practice-based Ph.D. at Goldsmiths, University of London.

Archival Practice: The Daphne Oram Archives

Starting in 2010, Mital developed archival browsing techniques built on top of his auditory models and began to explore the Daphne Oram archive, a collection of 60 hours of found audio tapes. The intention of this work was to help archivists understand the audio collection and learn more about Oram’s techniques for creating sound.

Archival Practice: LA Philharmonic

With upgraded browsers to support the visualization of over 4 million audio fragments and 7 terabytes of data, Mital used his expanded auditory models to explore 100 years of audio from the LA Philharmonic during their centennial celebration.

The models are capable of listening to the entire archive, pulling out representations that they find meaningful, and organizing the collection into a representational space that can be used to browse, annotate, and even play the archive as an instrument.

Archival Practice: LA Philharmonic, Public Art Symphony

These archival explorations led to a collaboration with Refik Anadol to turn 100 years of the Los Angeles Philharmonic audio into a public art symphony projected onto the Walt Disney Concert Hall.

YouTube Smash Up

Exploring the synthesis of archives, Mital re-applied his models to build the artwork entitled “YouTube Smash Up.” The intention of this artwork was to create a generative virus for YouTube which tried to resynthesize the #1 most viewed video using only content from the remaining Top 10 videos. The idea was to continue until it resynthesized itself.

Unfortunately, the videos all faced numerous copyright infringement claims and Mital had to stop the process. Before he could regain access to his account, he had to pass a quiz about copyright infringement with an accompanying video of YouTube’s Copyright School with Happy Tree Friends (which he then resynthesized using other HTF videos).

Abstractions

Mital’s “Abstractions” series represents the next stage in the evolution of his computational practice as he explores the transformation of banal visual features into meditative artworks generated from the visual and auditory models he’s been developing for nearly 20 years. In this series, he explores the re-presentation of everyday features as artworks with striking visual complexity, reminiscent of the sacred geometries found in mosques, cathedrals, and iconic sacred spaces.

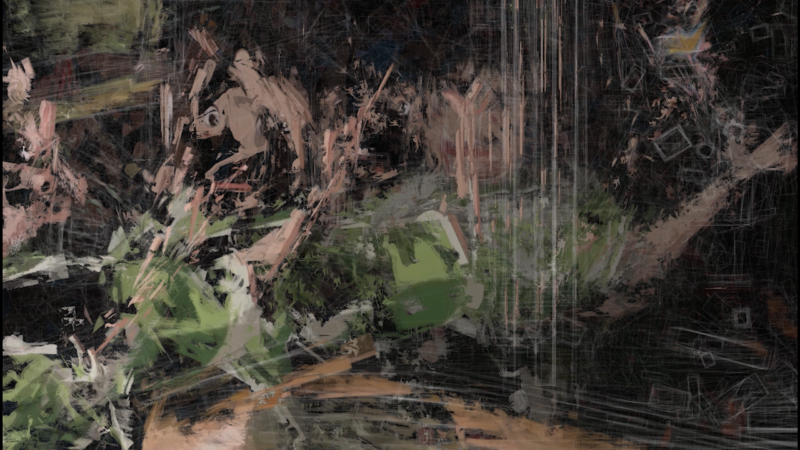

The Road to Bareilly, Pt. I

Mital’s artwork “The Road to Bareilly, Pt. I” is part of a new series of video works exploring themes of diaspora and immigration. The work follows the artist’s trip with his family to visit his mother’s hometown in India, and explores the sacrifices made by immigrants and their children, as well as the challenges of reconciling one’s roots with one’s present.

In the videos, Mital’s mother, who has now lived in the US longer than in India, reflects on her experience in Bareilly – the town where she grew up. She considers how it’s been transformed from the jungle she once knew into a busy, crowded metropolis. Mital reflects on the same sentiment, but also attempts to reconcile his associations with India with his fractured Indian American roots.

The associations and juxtapositions of the algorithmic collage are meant to highlight the fractured nature of perception, and how one’s memories almost become the paint for one’s reality.